Multi-Model Benchmark: Round 1 Results | ArangoDB Blog

It’s time for another update of my NoSQL performance blog series. This hopefully concludes the first part of this series with the initial databases ArangoDB, MongoDB, Neo4J and OrientDB and I can now start to check out other databases. I’m getting a lot of requests to test others as well and I’ll try to add them as soon as possible. Pull requests to my repository are also more than welcome. Remember it is all open-source.

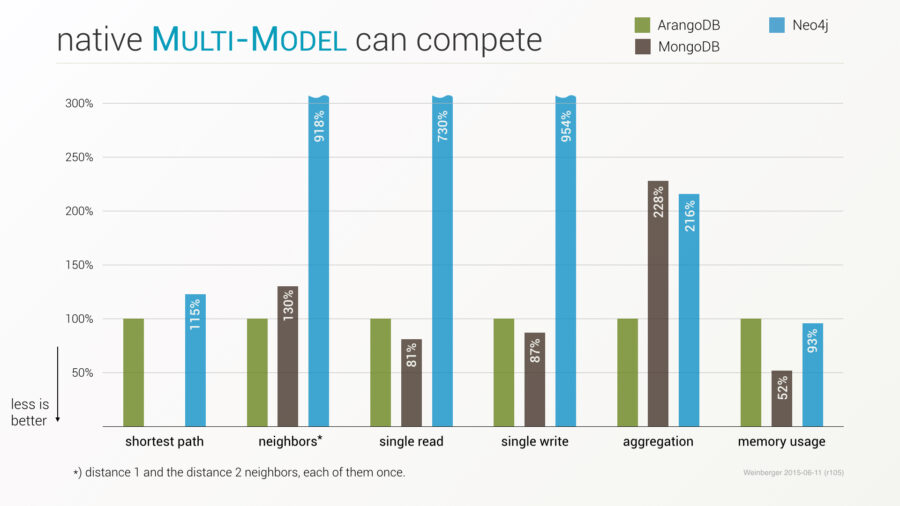

The first set of benchmarks was started as a proof that multi-model can compete with specialized solutions and I started with the corresponding top dogs (Neo4J and MongoDB) for graphs and documents. After the first blog post, we were asked by the community to include OrientDB as the other multi-model database, too, which makes sense and therefore I expanded the initial lineup.

Concluding the tests took a bit longer than expected, because vendors took up the challenge and improved their products with impressive results, just as we asked them to do. Still, for each iteration we needed some time to run all tests, see below. On the upside, everyone can benefit from the improvements, which is an awesome by-product of the benchmark tests.

The Great AQL Shootout: ArangoDB 2.5 vs 2.6 Comparison

For the ArangoDB 2.6 release from last week we’ve put some performance tests together. The tests will compare the AQL query execution times in 2.5 and 2.6.

The results look quite promising: 2.6 outperformed 2.5 for all tested queries, mostly by factors of 2 to 5. A few dedicated AQL features in the tests got boosted even more, resulting in query execution time reductions of 90 % and more. Finally, the tests also revealed a dedicated case for which 2.6 provides a several hundredfold speedup.

More good news: not a single one of the test queries ran slower in 2.6 than in 2.5.

Improving Databases: Open Source Competitive Benchmark

TL;DR: Our initial benchmark has raised a lot of interest. Initially we wanted to show that multi-model can compete with other solutions. Due to the open and competitive way we have conducted the benchmark, the discussions around it have led to improvements in all products, better algorithms, faster drivers and better ways to use the databases.

General Setup

From the outset we published all code and data and asked the vendors of all tested products as well as the general public not only to run the tests on their own machines, but also to suggest improvements in the data models, test code, database configuration, driver usage and server configuration. This led to a lively discussion, lots of pull requests and even to the release of improved versions of the database products themselves!

This process exceeded all our expectations and is yet another great example of community collaboration not only for fact finding but also for product improvements. Obviously, the same benchmark code will always show slightly different results when run on different hardware, operating systems, network setups and with more or less RAM. Therefore, a reliable result of a benchmark can essentially only be achieved by allowing everybody to run it on their own machines.

The technical setup is described in the above blog post. Let me briefly repeat the key facts.

Speeding Up Array Operations: ArangoDB Performance Tips

Last week some further optimization slipped into 2.6. The optimization can provide significant speedups in AQL queries using huge array/object bind parameters and passing them into V8-based functions.

It started with an ArangoDB user reporting a specific query to run unexpectedly slow. The part of the query that caused the problem was simple and looked like this:

    FOR doc IN collection
      FILTER doc.attribute == @value
      RETURN TRANSLATE(doc.from, translations, 0)

In the original query, translations was a big, constant object literal. Think of something like the following, but with a lot more values:

    {
      "p1" : 1,
      "p2" : 2,
      "p3" : 40,
      "p4" : 9,
      "p5" : 12
    }

The translations were used for replacing an attribute value in existing documents with a lookup table computed outside the AQL query.

The number of values in the translations object was varying from query to query, with no upper bound on the number of values. It was possible that the query was running with 50,000 lookup values in the translations object.
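To get a feel for the size of such a query, here is a minimal sketch in plain JavaScript that builds a lookup table with 50,000 entries and pairs it with the query shown above. Passing the table as a bind parameter named `@translations` instead of inlining it as a literal is an assumption made for illustration, as are the helper name `buildTranslateQuery` and the placeholder values in the table.

```javascript
// Hypothetical helper: builds the query text plus its bind parameters.
function buildTranslateQuery(numValues, value) {
  var translations = {};
  for (var i = 1; i <= numValues; ++i) {
    // Placeholder mapping "p1" -> 1, "p2" -> 2, ...
    // In a real application the values come from outside the query.
    translations["p" + i] = i;
  }
  var query = [
    "FOR doc IN collection",
    "  FILTER doc.attribute == @value",
    "  RETURN TRANSLATE(doc.from, @translations, 0)"
  ].join("\n");
  return {
    query: query,
    bindVars: { value: value, translations: translations }
  };
}

var q = buildTranslateQuery(50000, "foo");
console.log(Object.keys(q.bindVars.translations).length); // 50000
// Size of the serialized bind parameters in characters.
console.log(JSON.stringify(q.bindVars).length);
```

In arangosh, the result could then be run with `db._query(q.query, q.bindVars)`, assuming a collection named `collection` exists.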

Performance Comparison: ArangoDB vs MongoDB, Neo4j, OrientDB

My recent blog post “Native multi-model can compete” has sparked considerable interest on HN and other channels. As expected, the community has immediately suggested improvements to the published code base and I have already published updated results several times (special thanks go to Hans-Peter Grahsl, Aseem Kishore, Chris Vest and Michael Hunger).

Please note: An update is available (June ’15) and a new performance test with PostgreSQL added.

Here are the latest figures and diagrams:

The aim of the exercise was to show that a multi-model database can successfully compete with specialized players on their own turf with respect to performance and memory consumption. It is therefore not surprising that quite a few interested readers have asked whether I could include OrientDB, the other prominent native multi-model database.

Multi-Model Benchmark: Assessing ArangoDB’s Versatility

Claudius Weinberger, CEO ArangoDB

TL;DR Native multi-model databases combine different data models like documents or graphs in one tool and even allow mixing them in a single query. How can this concept compete with a pure document store like MongoDB or a graph database like Neo4j? I myself and a lot of folks in the community asked that question.

So here are some benchmark results: 100k reads → competitive; 100k writes → competitive; friends-of-friends → superior; shortest-path → superior; aggregation → superior.

Feel free to comment, join the discussion on HN and contribute. It's all on GitHub.

Getting Unique Values: Efficient Data Retrieval in ArangoDB

While paging through the issues in the ArangoDB issue tracker I came across issue #987, titled “Trying to get distinct document attribute values from a large collection fails”.

The issue was opened around 10 months ago when ArangoDB 2.2 was around. We improved AQL performance somewhat since then, so I was eager to see how the query would perform in ArangoDB 2.6, especially when comparing it to 2.2.

For reproduction I quickly put together some example data to run the query on:

    var db = require("org/arangodb").db;
    var c = db._create("test");
    for (var i = 0; i < 4 * 1000 * 1000; ++i) {
      c.save({ _key: "test" + i, value: (i % 100) });
    }
    require("internal").wal.flush(true, true);
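The data set above contains only 100 distinct `value`s spread across four million documents. The following plain-JavaScript sketch mirrors that setup in memory on a smaller scale, without ArangoDB, to show what a distinct-values query should return. The helper names and the reduced document count are assumptions for illustration, and the AQL in the comment is one possible way to express the request, not necessarily the query from the issue.

```javascript
// One possible AQL formulation (an assumption, not taken from the issue):
//   FOR doc IN test COLLECT value = doc.value RETURN value

// Hypothetical helper: generates documents shaped like the ones above.
function makeDocs(n) {
  var docs = [];
  for (var i = 0; i < n; ++i) {
    docs.push({ _key: "test" + i, value: i % 100 });
  }
  return docs;
}

// Collects the distinct values of one attribute, sorted ascending.
function distinctValues(docs, attribute) {
  var seen = new Set();
  docs.forEach(function (doc) {
    seen.add(doc[attribute]);
  });
  return Array.from(seen).sort(function (a, b) {
    return a - b;
  });
}

var values = distinctValues(makeDocs(10000), "value");
console.log(values.length); // 100
```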

IN List Improvements: ArangoDB Query Enhancement

Another performance improvement could be accomplished in the latest devel-branch: The handling of large IN-lists. Those become much faster than in the previous releases. Large IN-lists are normally used when comparing attribute or index values against some big array of lookup values or keys provided by the application.

Read on how this improvement reduces query execution time.

String Comparison Performance: ArangoDB Query Optimization

We’ve been using Callgrind with its powerful frontend KCachegrind for quite some time to analyse where the hot spots can be found inside of ArangoDB. One thing always accounting for a huge chunk of the resource usage was string comparison. Yes, string comparison isn’t as cheap as one may think, but it’s been even a bit more expensive than one would expect. And since much of the business of a database is string comparison, it’s used a lot.

ArangoDB and V8 use the ICU Library for these purposes (with no alternatives on the market) – so basically we heavily rely on the performance of the ICU library. However, one line in the ICU change-log – ‘Performance: string comparisons significantly faster’ – made us listen up.

So it was a crystal clear objective to take advantage of these performance improvements. As we use the ICU bundled with V8, we had to make sure it would work smoothly with V8 first ;-). After rolling out the upgrade, we wanted to know whether everything was working fine with valgrind etc., and get some figures on how large the actual improvement is.
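To illustrate why a locale-aware comparison does more work than a plain code-unit comparison, here is a small sketch using JavaScript's `Intl.Collator`, which V8 implements on top of ICU. The sample strings and the `"en"` locale are assumptions for illustration; this is not ArangoDB's own comparison code.

```javascript
var words = ["b", "a", "B", "A"];

// The default sort compares UTF-16 code units,
// so all uppercase letters end up before the lowercase ones.
var byCodeUnit = words.slice().sort();

// A collator applies language-specific ordering rules instead.
var collator = new Intl.Collator("en");
var byLocale = words.slice().sort(collator.compare);

console.log(byCodeUnit.join(" ")); // A B a b
console.log(byLocale.join(" "));

// "a" sorts before "B" under collation rules, but not by code unit.
console.log(collator.compare("a", "B") < 0); // true
console.log("a" < "B"); // false
```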


Return Value Optimization for AQL: ArangoDB Query Efficiency

While searching for further AQL query optimizations last week, we found that intermediate AQL query results were copied one time too often in some cases.

More precisely, the data that a query’s ReturnNode returns to the caller was copied into the ReturnNode’s own register. Since ReturnNodes never modify their input data, this called for what compilers know as return-value optimization.

2.6 will now optimize away these copies in many cases, and my blog post Return Value Optimization for AQL shows performance benefits of 10-25% that can be expected due to the optimization.
