ArangoDB 2012: Exploring Additional Datafile Sizes

A while ago we wrote a blog article that explained how ArangoDB uses disk space. That article compared the disk usage of ArangoDB, CouchDB, and MongoDB when loading some particular datasets. In this post, we’ll look in more detail at the disk usage of ArangoDB for insert, update, and delete operations. We’ll also compare it to CouchDB for reference.

A quick recap

The other article briefly mentioned that the actual disk space consumption of ArangoDB is partly determined by the journal size it uses. By default, ArangoDB will create and prefill journal files of 32 MB each. Minimum disk space usage therefore is approx. 2 * 32 MB (one journal file, one compactor file) per collection (each collection has its own journals).

Documents are written to an existing journal file until it is full, and then a new journal file is created. Disk usage will therefore not grow when documents are added, modified or deleted as long as the current journal file is not yet full. Once a journal file is filled up, ArangoDB will create a new one and allocate additional disk space. Disk usage in ArangoDB will therefore eventually grow in chunks of 32 MB. If the default journal size of 32 MB is inappropriate, it can be changed globally using the configuration setting “--database.maximal-journal-size”, or on a per-collection level.
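The chunked growth described above can be expressed as a small back-of-the-envelope model. This is only a sketch under simplifying assumptions: it counts exactly one compactor file per collection, assumes journals are filled completely before a new one is created, and ignores any other files a collection may have. The function name `estimated_disk_usage` is made up for this illustration and is not part of ArangoDB.

```python
import math

MB = 1024 * 1024


def estimated_disk_usage(data_bytes: int, journal_size: int = 32 * MB) -> int:
    """Rough on-disk size of one collection under the chunked allocation model.

    Assumption: one compactor file of the same size as a journal, and
    journals that are filled completely before the next one is created.
    """
    # At least one journal is preallocated, even for an empty collection.
    journals = max(1, math.ceil(data_bytes / journal_size))
    # One extra file of the same size accounts for the compactor file.
    return (journals + 1) * journal_size


if __name__ == "__main__":
    for size_mb in (32, 16, 8, 4):
        usage = estimated_disk_usage(10 * MB, size_mb * MB)
        print(f"journal size {size_mb} MB: about {usage // MB} MB on disk")
```

With the default journal size, an empty collection and a collection holding 10 MB of data come out at the same 64 MB in this model, which is the "no growth until the journal is full" behavior from the text.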

MongoDB also preallocates and prefills datafiles. However, it does so with datafiles of increasing size (up to a cap of 2 GB per file), so the amount of disk space allocated per step grows as new datafiles are created.

CouchDB is different in that it does not preallocate/prefill any disk space in advance, but will allocate new disk space only when new data arrives. Datafile sizes in CouchDB therefore grow steadily rather than in jumps as in ArangoDB and MongoDB.

The effect of journal sizes on disk usage

The actual disk storage requirements in ArangoDB are determined mostly by the following factors:

- the journal size used

- the number of documents in a collection

- heterogeneity of document attributes (names and data types, “shapes” in ArangoDB lingo)

- whether or not there are deleted documents and automatic compaction can clean them

Although in the end the disk space needed mostly depends on the data that is loaded, the journal size is an interesting factor to play with. Journal sizes of 32 MB might not be optimal if you plan to have a lot of collections with little data in each. We therefore measured the effect of adjusting journal file sizes, both in terms of disk space usage and query performance. We conducted measurements with journal sizes of 32 MB (the default value), 16 MB, 8 MB, and 4 MB. The different journal sizes are named “arangod32”, “arangod16”, “arangod8”, and “arangod4” in the charts you’ll find below.

We also compared ArangoDB’s disk usage with the disk usage of CouchDB to have some reference. CouchDB’s disk usage is denoted as “couchdb” in the charts below.

The tests were done on a machine with 64 bit architecture, with the database directories all residing on the same ext4 filesystem. The versions used in the tests were ArangoDB 1.1-alpha and CouchDB 1.2.0.

The documents inserted had a size of 90-93 bytes in their JSON source format. When document updates were performed, the updated documents had a JSON source size of around 100 bytes each. All documents had an identical attribute structure, but different attribute values. All document sets fit into the available RAM of 12 GB.

All following operations were performed via the HTTP document APIs of ArangoDB and CouchDB. Individual HTTP operations were used instead of bulk operations to simulate the load of independent clients. Automatic compaction was run in ArangoDB but did not yet have an effect. Neither manual nor automatic compaction was used in CouchDB, nor were replication or data file compression.

ArangoDB and CouchDB were used with the options “waitForSync=false” and “delayed_commits=true”, respectively. These allow the databases to write changes to disk lazily, so that individual operations do not have to wait for disk I/O.
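To make the test setup more concrete, here is a minimal sketch of how individual (non-bulk) inserts at a given concurrency level could be issued. It is not the script used for the measurements. The base URL, the collection name `test`, and the helper names are assumptions made for illustration, and the `send` callable is injected so that the sketch can be tried without a running server.

```python
import json
import urllib.request
from concurrent.futures import ThreadPoolExecutor


def build_insert_request(base_url, collection, document):
    """Build one HTTP request that creates a single document in ArangoDB."""
    # Assumption: the document API is reachable under /_api/document and
    # takes the target collection as a URL parameter.
    url = f"{base_url}/_api/document?collection={collection}"
    body = json.dumps(document).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )


def insert_individually(documents, concurrency, send,
                        base_url="http://localhost:8529", collection="test"):
    """Send one request per document, using `concurrency` worker threads."""
    def insert_one(document):
        return send(build_insert_request(base_url, collection, document))

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        # pool.map keeps the result order equal to the input order.
        return list(pool.map(insert_one, documents))
```

Against a real server, `urllib.request.urlopen` could be passed as `send`. Varying `concurrency` then changes only the order in which the requests arrive, not the number of requests.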

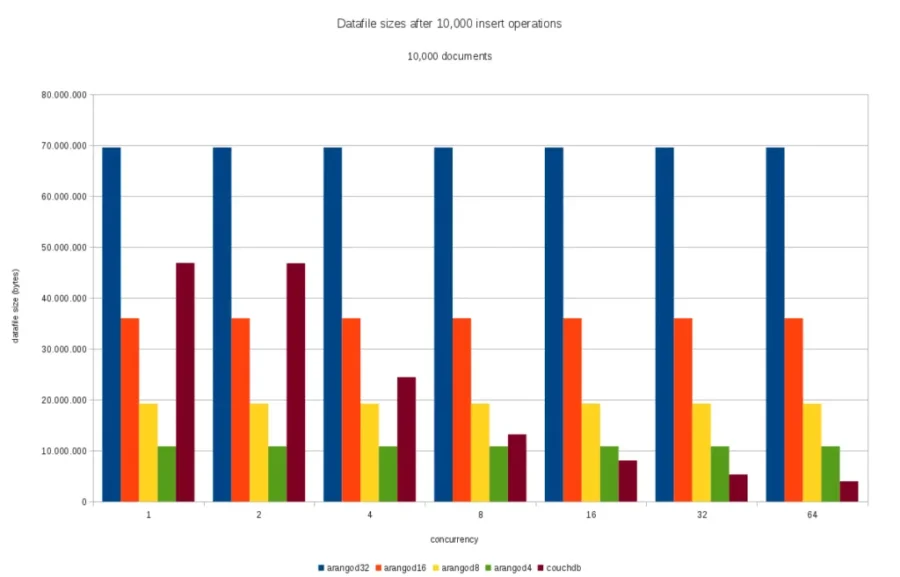

Insert operations, 10,000 documents

Measuring ArangoDB’s disk usage after 10,000 individual document insertions into a new collection shows no surprises:

- the smaller the journal file size, the less disk space will be used. This should be intuitive

- varying the concurrency of insert operations does not change disk usage. This is intuitive because the same amount of data gets written to disk, only in different order

CouchDB’s disk usage is quite different for the same workload, as it shrinks when concurrency increases. This is because CouchDB was run with the “delayed commits” option that allows it to merge multiple changes together and write them to disk in batches.

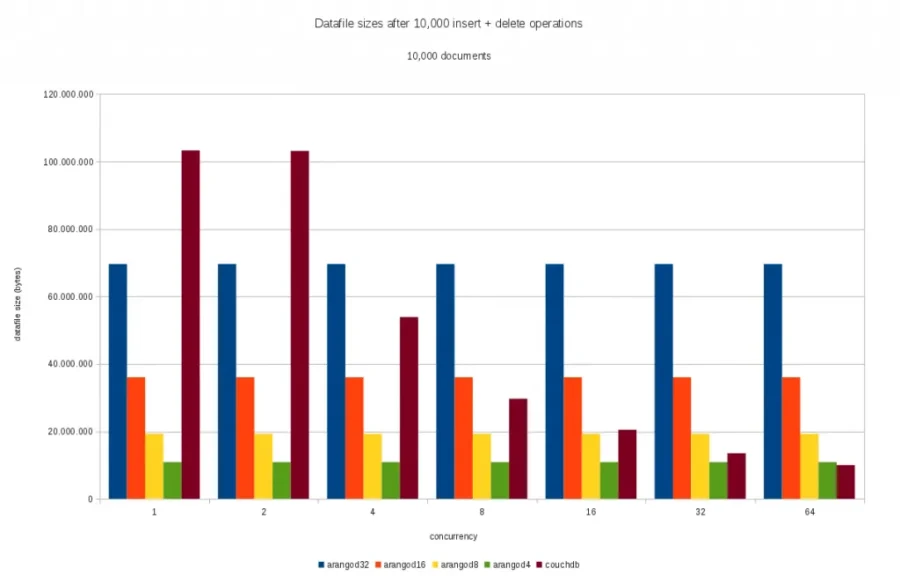

Delete operations, 10,000 documents

When performing 10,000 individual delete operations on 10,000 previously inserted documents, the total disk space used in ArangoDB is not affected by the concurrency level. This is because the same amount of data (one delete marker for each document deleted) gets written to disk, only in a different order.

CouchDB again makes use of the delayed commit feature to merge multiple changes and save disk space when there are concurrent writes.

Note: the datafile size in this case is determined by size of insert operations plus size of delete operations.
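The note above boils down to simple arithmetic: a delete is appended to the journal instead of removing the earlier insert. The sketch below illustrates this. The per-marker sizes are hypothetical placeholders, not values measured in these tests, and both function names are made up for this example.

```python
MB = 1024 * 1024


def appended_bytes(n_docs, first_marker_bytes, second_marker_bytes):
    """Bytes appended when each document is inserted and then deleted once."""
    return n_docs * (first_marker_bytes + second_marker_bytes)


def fits_in_one_journal(total_bytes, journal_size):
    """True if everything written so far still fits into the first journal."""
    return total_bytes <= journal_size


if __name__ == "__main__":
    # Hypothetical marker sizes (150 bytes per insert, 50 bytes per delete).
    total = appended_bytes(10_000, 150, 50)
    for size_mb in (32, 16, 8, 4):
        fits = fits_in_one_journal(total, size_mb * MB)
        print(f"journal size {size_mb} MB: fits in first journal = {fits}")
```

The same calculation applies to updates, with the size of the update marker in place of the delete marker.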

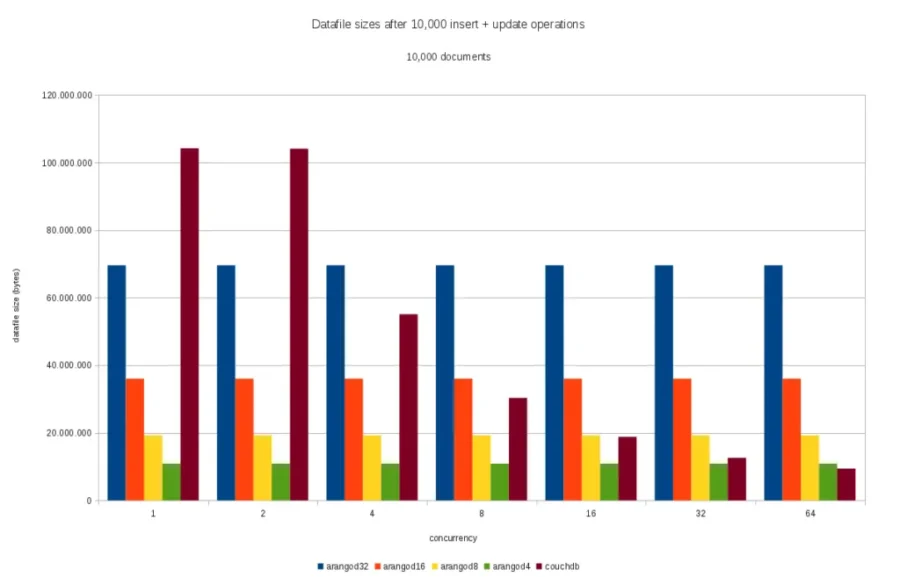

Update operations, 10,000 documents

Finally, there are update operations. For them, we can observe about the same results as for the other operations.

Note: the datafile size in this case is determined by size of insert operations plus size of update operations.

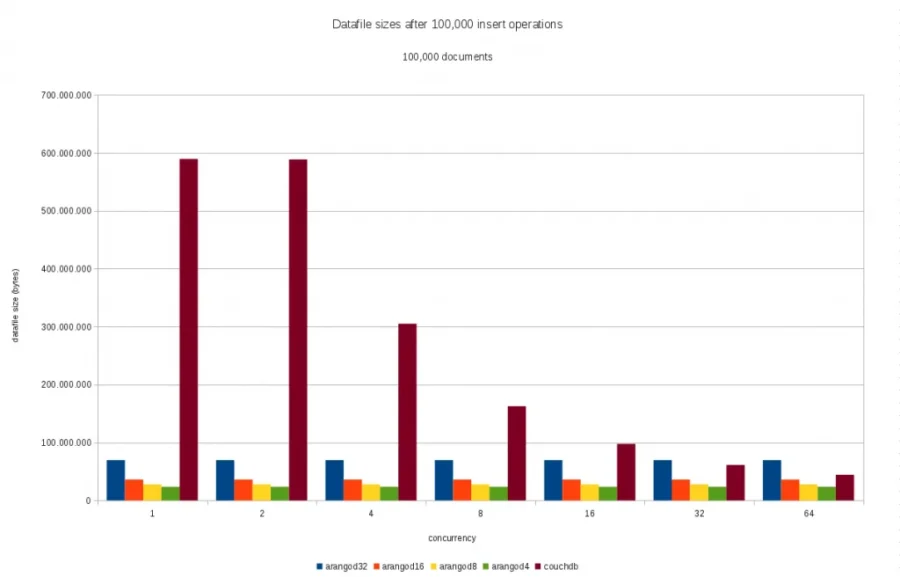

Insert operations, 100,000 documents

Increasing the number of individually inserted documents from 10,000 to 100,000 does not change the picture much for ArangoDB as the inserted data still fits into the initially created journal files.

For CouchDB, increasing the document number tenfold also increases the disk usage tenfold when there’s no concurrency. This is intuitive as each change will get written to disk individually. When concurrency increases, CouchDB again is very good at merging the changes so much less disk space is used:

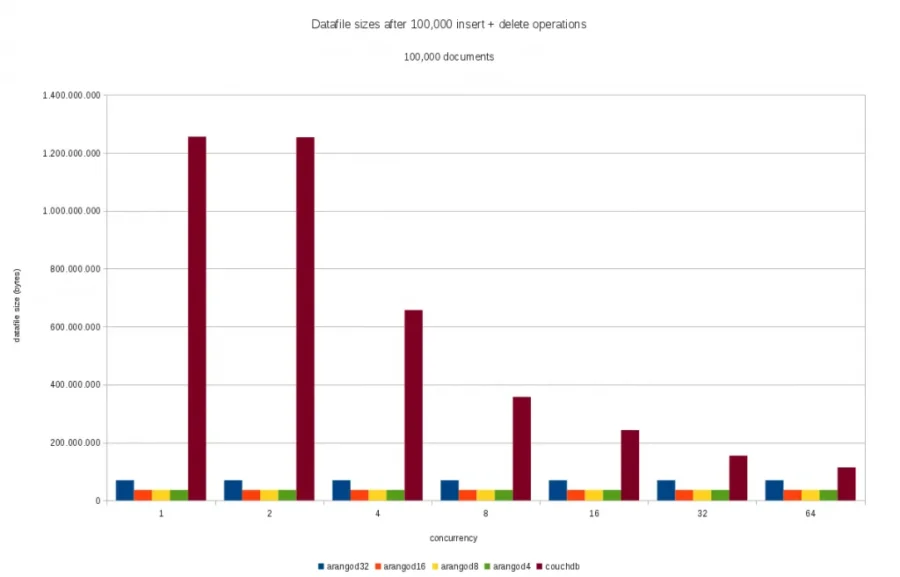

Delete operations, 100,000 documents

Creating and deleting 100,000 individual documents still made the total data fit into the initial journal files in ArangoDB for the bigger journal sizes, so no changes in disk usage can be observed in these cases. However, with the smaller journals, some extra journal files needed to be created, as can be seen from the following picture:

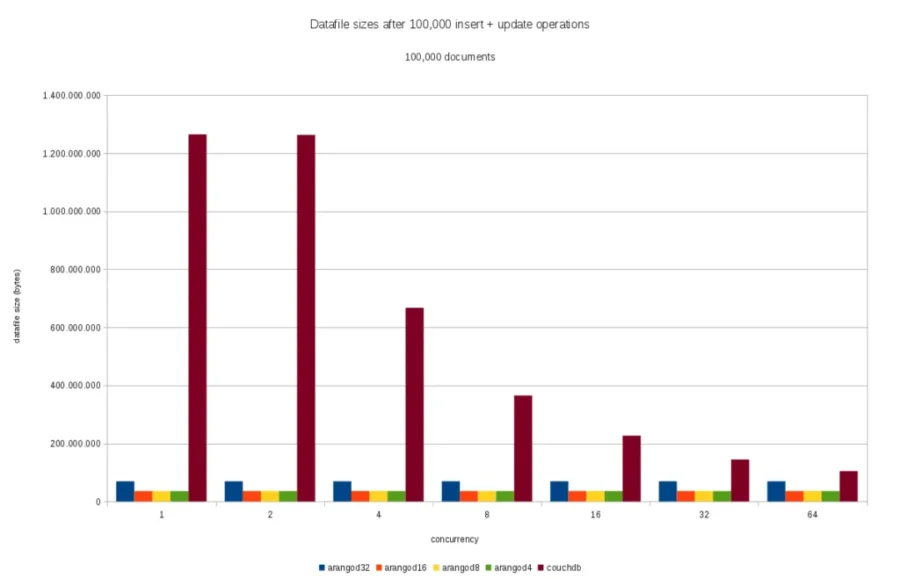

Update operations, 100,000 documents

Almost the same results can be observed when individually updating 100,000 documents that were previously created. Again, the small journals were not sufficient so extra journal files were created.

Conclusion

From the disk space point of view, it might be useful to consider smaller journal file sizes if you plan on having little data per collection in ArangoDB. This saves some initial disk overhead per collection and might be worth doing if you have lots of small collections. In a follow-up post, the performance impact of various journal sizes will be examined.

As mentioned before, the journal size is not the only factor that determines disk usage. The data loaded in these tests were just some example data that produced the above results. Other data might produce different results so your mileage may vary. When you adjust the journal size on your system, please be sure to run your own tests with your own data.

Please also note that the maximum journal size configured in ArangoDB determines the maximum size of documents that can be saved. Picking a journal size that is smaller than the size of the biggest document to be inserted into a collection will not work. But the journal size can be set on collection level in ArangoDB so this should not be too much of an issue.
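Before lowering the journal size, it can help to check it against the biggest document you expect to store. The following is a rough sketch of such a check. It uses the JSON source size as a stand-in for the on-disk size, which is an assumption: the actual storage format adds its own overhead, so the optional `overhead_bytes` parameter is a placeholder you would have to choose yourself. Both function names are hypothetical.

```python
import json


def largest_document_bytes(documents):
    """Size in bytes of the biggest document, measured on its JSON source."""
    return max(
        (len(json.dumps(doc).encode("utf-8")) for doc in documents),
        default=0,
    )


def journal_size_is_sufficient(documents, journal_size, overhead_bytes=0):
    """True if the biggest document (plus assumed overhead) fits in a journal."""
    return largest_document_bytes(documents) + overhead_bytes <= journal_size


if __name__ == "__main__":
    docs = [{"a": "x" * 10}, {"a": "x" * 1000}]
    print(journal_size_is_sufficient(docs, 4 * 1024 * 1024))
```

If the check fails for only a few collections, a larger journal size can be set just for those collections while keeping a small one elsewhere.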
